Nvidia calls time on native-res gaming, says DLSS is more 'real' than raster

"Raster is a bag of fakeness."

Kiss goodbye to native-res raster rendering. The biggest noise in PC graphics, Nvidia, thinks it's game over. The future is—it has to be—AI rendering. In fact, AI rendering is more "real" than traditional raster graphics rendering.

In a Digital Foundry round table discussion on Nvidia's new DLSS 3.5 Ray Reconstruction technology, Nvidia's Vice President of Applied Deep Learning Research, Bryan Catanzaro, laid out Nvidia's thinking.

Catanzaro responded to a comparison between Big Hero 6, said to be the first CGI movie to use path tracing throughout, and the similar, if not entirely comparable, technology in Cyberpunk 2077, observing how remarkable it is to see the latter running in real time at 4K.

"Moore's Law is dead. We don't know as a civilisation how to keep turning the crank on traditional ways of doing things. We have to be smarter," Catanzaro says.

"You fundamentally realise you have to be more intelligent about the graphics rendering process. Brute force—let's re-render every frame 120 times a second at 2160p output—that is wasteful because we know that there are a lot of correlations in the output of any rendering process.

"We know that there are a lot of opportunities to be smarter, to reuse compute. And then deliver transformational image quality benefits, things like Cyberpunk, that we could never have imagined doing before."

Catanzaro's overarching point isn't just that DLSS, as an umbrella technology, helps with performance. It's that DLSS makes graphics more realistic, for instance because its AI is so adept at denoising ray-traced visuals.


"DLSS 3.5 makes Cyberpunk even more beautiful than native rendering. The reason for that is because the AI is able to make smarter decisions about how to render the scene than what we knew without AI. I would say that Cyberpunk frames using DLSS and Frame Generation are much realer than traditional graphics frames," he explains.

Describing all the kludges and tricks used by traditional raster rendering to simulate lighting and reflections, Catanzaro says he's happy to see it go. "Raster is a bag of fakeness. We get to throw that out and start doing path tracing and actually get real shadows and real reflections. And the only way we do that is by synthesising a lot of pixels with AI."

Slightly facetiously and with a twinkle in his eye, Catanzaro says he is now in the habit of calling native rendering "fake frames". That's obviously a nod to critics who have dismissed the output of Nvidia's Frame Generation technology as fake frames.

Listening to Catanzaro propound the virtues of DLSS, it's hard not to be won over. Particularly when it comes to ray tracing and path tracing, which are incredibly computationally intensive when rendered conventionally, Ray Reconstruction does indeed seem like something of a game changer.

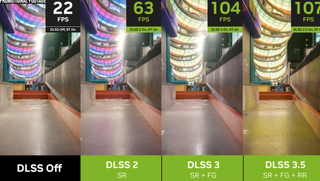

For sure, it's remarkable how rapidly Nvidia is iterating on DLSS. The 1.0 version was a bit of a train wreck. DLSS 2.0 did a far better job of basic upscaling. Then DLSS 3 introduced Frame Generation, and now we have Ray Reconstruction. It's all happening so fast.

Despite all that, it's still hard to be completely comfortable with the idea that DLSS rendering is, in effect, the baseline. For starters, that makes comparing GPU performance between vendors almost impossible. With raster rendering or even what you might call "conventional" ray tracing, you can directly compare AMD and Nvidia GPUs outputting essentially the same visual quality.

But an Nvidia GPU running Ray Reconstruction actually outputs different visuals to any GPU that does not support the feature. So, how then do you compare Nvidia hardware to AMD hardware?


Moreover, for Nvidia's competitors in the gaming graphics space, this is all a bit of a nightmare. AMD has consistently been a step or three behind DLSS with its FSR technology. First it played catch-up with upscaling. More recently, it has been belatedly trying to close the gap on Frame Generation with the Fluid Motion Frames feature in FSR 3, which still isn't actually available.

But should we now expect AMD to respond to Ray Reconstruction and DLSS 3.5? Is that even realistic, given how far behind AMD is on "conventional" ray tracing performance?

There's plenty to unpick here. But if we had to guess, we'd say Nvidia's AI-powered vision of the future of game rendering looks likely to prevail over any competitor planning to compete mainly by producing more powerful hardware.

Jeremy has been writing about technology and PCs since the 90nm NetBurst era (Google it!) and enjoys nothing more than a serious dissertation on the finer points of monitor input lag and overshoot followed by a forensic examination of advanced lithography. Or maybe he just likes machines that go “ping!” He also has a thing for tennis and cars.
